GPT AI Data Security

Data security is a prerequisite for getting value from GPT applications. Enterprises must establish end-to-end protection across the "input-processing-output" workflow through measures such as data isolation, access control, and audit trails.

GPT Data Security Risks

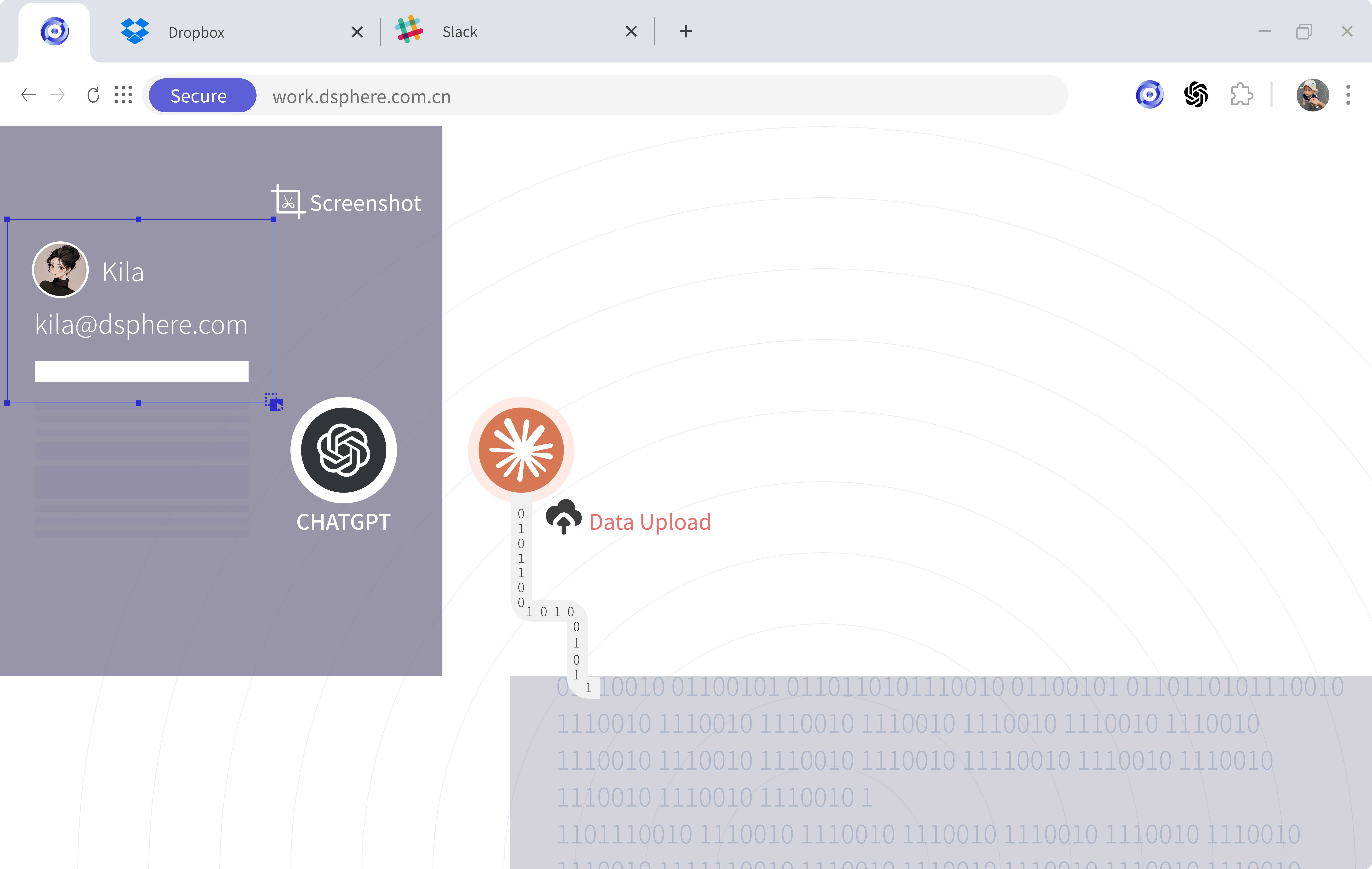

Employees who use GPT-based tools create a significant risk of data leakage. According to a report, approximately 25% of employees may upload sensitive data, such as company business plans, customer information, and product designs, into such AI tools.

GPT Screenshot

Prohibit GPT tools from taking automatic screenshots of sensitive business systems or their pages.

Browser API Control

Enforce fine-grained control over browser APIs to prevent GPT browser plugins from freely invoking interfaces and accessing web application page data.

Browser Extension

Independently manage all data permissions for browser plugins, such as screen capture, data reading, data upload, and webpage modification, and appropriately define the permission scope for GPT plugins.
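As a rough illustration of the per-permission scoping described above, the following TypeScript sketch models a default-deny policy for a single plugin. It is not DSphere's implementation or API: the `Permission` names, the `ExtensionPolicy` shape, the `isAllowed` helper, and the example URL and extension ID are all hypothetical and exist only to show the idea.

```typescript
// Hypothetical policy model for illustration; none of these names come from DSphere.
type Permission = "screenCapture" | "dataRead" | "dataUpload" | "pageModify";

interface ExtensionPolicy {
  extensionId: string;
  // Permissions granted per URL pattern; anything not listed is denied.
  rules: { urlPattern: RegExp; allow: Permission[] }[];
}

// Default-deny check: a permission is granted only if some rule
// matches the page URL and explicitly lists that permission.
function isAllowed(
  policy: ExtensionPolicy,
  url: string,
  permission: Permission
): boolean {
  return policy.rules.some(
    (rule) => rule.urlPattern.test(url) && rule.allow.includes(permission)
  );
}

// Example (assumed values): a GPT plugin may read pages on one
// documentation site but may not capture, upload, or modify anything.
const gptPluginPolicy: ExtensionPolicy = {
  extensionId: "example-gpt-plugin",
  rules: [
    { urlPattern: /^https:\/\/docs\.example\.com\//, allow: ["dataRead"] },
  ],
};
```

Under this sketch, `isAllowed(gptPluginPolicy, "https://docs.example.com/page", "dataRead")` is granted, while `dataUpload` on the same page and any permission on an unlisted site are denied. Treating each permission separately is what lets a plugin read a page without also being able to upload its contents.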

Frequently asked questions

What problem does DSphere address for GPT-style tools at work?

Organizations worry that employees may paste or upload sensitive business data into GPT-style applications, where traditional endpoint controls do not cover browser-side behavior in the same way. DSphere applies enterprise browser governance to access and data flows.

Does this replace ChatGPT or block all AI tools?

This page describes the kind of governance an enterprise browser provides, such as extension permissions and upload or download policy. It does not claim to fully replace or block third-party AI services.

Which control areas are emphasized on this page?

This page emphasizes browser extension permission management and controls on uploading and downloading sensitive data in GPT-related workflows.

How can we try DSphere?

Use the Free Trial button on this site; it opens the DSphere account onboarding flow in a new window.

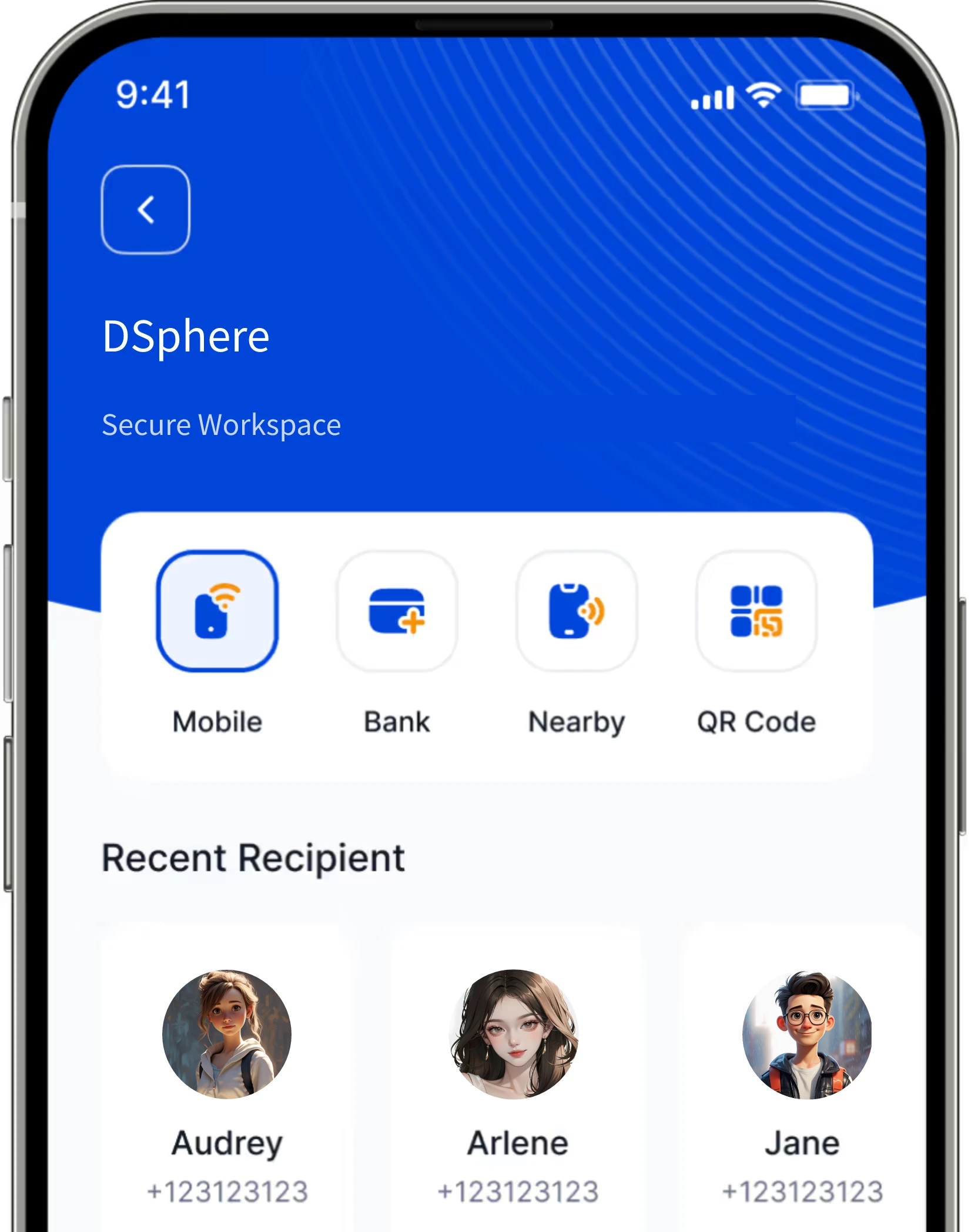

Time to Rebuild Data Security

Inside DSphere is work; outside DSphere is life. Enterprises need not worry about data leaks, and employees need not worry about their privacy being infringed.